In the high-stakes world of mass cytometry, quality control is the unsung hero, ensuring that our cellular symphonies play out in perfect harmony. Let’s explore the critical steps that keep our CyTOF instruments singing on key and our data ringing true.

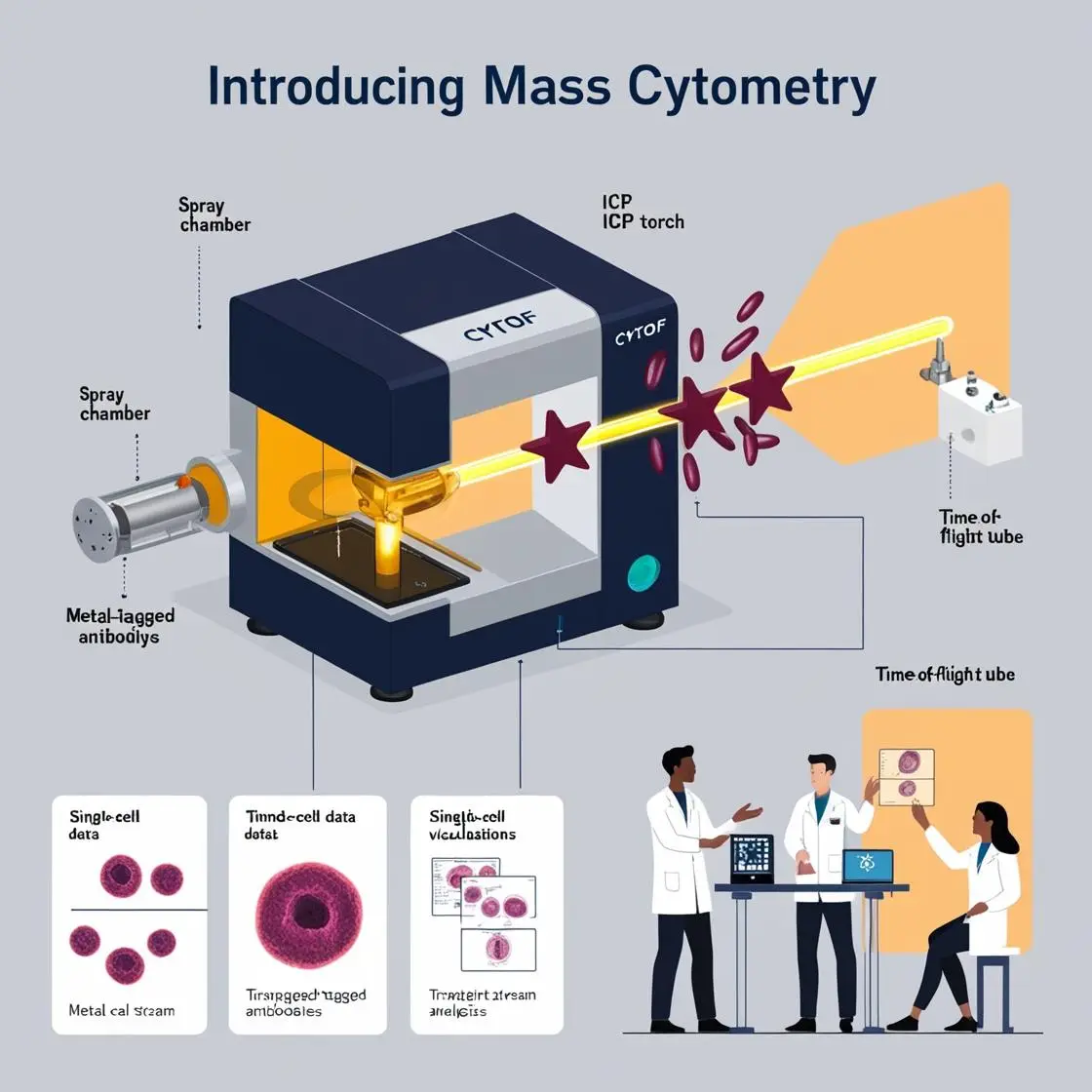

Instrument Calibration and Tuning

Just as a concert pianist must tune their instrument before a performance, a mass cytometrist must calibrate their machine before each run. This process is crucial for ensuring consistent and reliable data acquisition.

The day begins with a ritual familiar to all CyTOF users: the tuning solution. This cocktail of elements spans the instrument’s mass range, allowing for precise calibration of the time-of-flight measurement. It’s like tuning all 88 keys on a piano, ensuring each note (or, in our case, each mass) is precisely where it should be.
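To make the piano-tuning picture concrete, here is a minimal sketch of what calibrating a time-of-flight axis can look like. It assumes the textbook relation that flight time grows linearly with the square root of mass for singly charged ions, and fits that line from a few ions of known mass. The function names, the masses, and the constants in the demo are illustrative assumptions, not the vendor software's procedure.

```python
import math

def fit_tof_calibration(times_ns, masses_da):
    """Least-squares fit of sqrt(mass) = a * time + b from ions of known mass."""
    if len(times_ns) != len(masses_da) or len(times_ns) < 2:
        raise ValueError("need at least two (time, mass) pairs")
    roots = [math.sqrt(m) for m in masses_da]
    n = len(times_ns)
    mean_t = sum(times_ns) / n
    mean_r = sum(roots) / n
    sxx = sum((t - mean_t) ** 2 for t in times_ns)
    sxy = sum((t - mean_t) * (r - mean_r) for t, r in zip(times_ns, roots))
    a = sxy / sxx
    b = mean_r - a * mean_t
    return a, b

def time_to_mass(t_ns, a, b):
    """Convert a flight time to a mass using the fitted calibration line."""
    return (a * t_ns + b) ** 2

# Synthetic demo: flight times generated from made-up constants, not real data.
true_a, true_b = 0.001, 0.2
masses = [133.0, 139.0, 169.0, 193.0]  # illustrative masses only
times = [(math.sqrt(m) - true_b) / true_a for m in masses]
a, b = fit_tof_calibration(times, masses)
print(round(time_to_mass((math.sqrt(159.0) - true_b) / true_a, a, b), 3))
```

Spreading the known masses across the range is what keeps the fit honest at both ends, which is why the tuning solution covers the whole span rather than a single element.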

An important advance in this area comes from the work of Tricot et al. (2015) in “Cytometry Part A.” They introduced a method for daily tuning using a broad range of metal isotopes, which has become standard practice in many labs. This approach helps the instrument maintain optimal performance across its entire detection range.

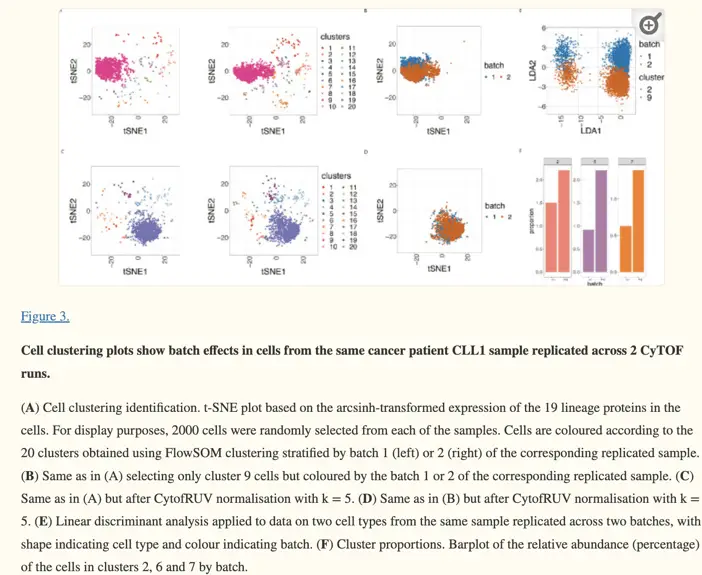

Batch Effect Correction

In an ideal world, every sample would be run under identical conditions. But in reality, experiments often span days, weeks, or even months, introducing the dreaded batch effect – the bane of every mass cytometrist’s existence.

Enter the world of batch effect correction. The landmark paper by Finck et al. (2013) introduced bead standards for normalization. These beads, spiked into each sample, act as internal standards, allowing for the correction of signal drift over time.

But the field hasn’t stood still. More recent work has introduced new normalization algorithms, such as CytoNorm, developed by Sofie Van Gassen and colleagues (Cytometry Part A, 2019). An even more recent paper, from 2022, presents CytofIn, an algorithm that enables integrated analysis of public mass cytometry datasets using generalized anchors.
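The idea shared by reference-based tools can be sketched in a few lines: run the same control sample in every batch, learn how its distribution for a marker differs from a goal distribution, and apply that mapping to the other samples in the batch. The example below is a deliberately simplified illustration for one marker, using piecewise-linear interpolation between quantiles. It is not the CytoNorm or CytofIn implementation (CytoNorm, for instance, clusters the cells first and fits splines), and all function names are hypothetical.

```python
from bisect import bisect_right

def quantiles(values, probs):
    """Linearly interpolated quantiles of values at the given probabilities."""
    data = sorted(values)
    out = []
    for p in probs:
        pos = p * (len(data) - 1)
        lo = int(pos)
        hi = min(lo + 1, len(data) - 1)
        out.append(data[lo] + (data[hi] - data[lo]) * (pos - lo))
    return out

def fit_batch_mapping(batch_control, goal_control,
                      probs=(0.0, 0.25, 0.5, 0.75, 1.0)):
    """Pair the control's quantiles in this batch with the goal quantiles."""
    return quantiles(batch_control, probs), quantiles(goal_control, probs)

def apply_mapping(x, mapping):
    """Map one intensity from the batch scale onto the goal scale."""
    src, dst = mapping
    if x <= src[0]:                      # below the control's range: shift only
        return x + (dst[0] - src[0])
    if x >= src[-1]:                     # above the control's range: shift only
        return x + (dst[-1] - src[-1])
    i = bisect_right(src, x) - 1         # segment with src[i] <= x < src[i + 1]
    frac = (x - src[i]) / (src[i + 1] - src[i])
    return dst[i] + (dst[i + 1] - dst[i]) * frac

# Toy demo: the control reads twice as bright in this batch as in the goal.
goal_control = [0.0, 1.0, 2.0, 3.0, 4.0]
batch_control = [0.0, 2.0, 4.0, 6.0, 8.0]
mapping = fit_batch_mapping(batch_control, goal_control)
print([apply_mapping(x, mapping) for x in (1.0, 3.0, 5.0)])
```

The mapping is learned only from the control, so it corrects technical differences between batches without forcing the biological samples themselves to look alike.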

Data Normalization Strategies

Normalization is the process of adjusting data to a common scale so that measurements are comparable across samples and experiments. It’s like ensuring that all the instruments in an orchestra are playing in the same key.

The bead-based normalization method introduced by Finck et al. (2013) remains a gold standard in the field. In this approach, beads containing known quantities of metal isotopes are added to each sample. The signal intensities from these beads are used to normalize the cellular data, correcting for variations in instrument sensitivity over time.
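As a rough illustration of the bead logic, the sketch below bins bead events into time windows, compares each window's median bead signal with the run-wide median, and turns that comparison into one multiplicative correction factor per window, which is then interpolated to the acquisition time of every cell. It is a simplified sketch under stated assumptions (fixed time windows, a single factor shared by all channels, hypothetical function names), not the published normalizer.

```python
from bisect import bisect_left
from statistics import median

def window_factors(bead_times, bead_signals, window):
    """Return (window_centre_time, correction_factor) pairs.

    bead_signals holds one list of per-channel intensities per bead event.
    Each factor is the least-squares slope through the origin that maps the
    window's bead medians onto the run-wide bead medians.
    """
    n_channels = len(bead_signals[0])
    baseline = [median(s[c] for s in bead_signals) for c in range(n_channels)]
    windows = {}
    for t, s in zip(bead_times, bead_signals):
        windows.setdefault(int(t // window), []).append(s)
    factors = []
    for idx in sorted(windows):
        beads = windows[idx]
        local = [median(b[c] for b in beads) for c in range(n_channels)]
        slope = (sum(b * l for b, l in zip(baseline, local))
                 / sum(l * l for l in local))
        factors.append(((idx + 0.5) * window, slope))
    return factors

def factor_at(t, factors):
    """Linearly interpolate the factor at time t, clamped at both ends."""
    centres = [c for c, _ in factors]
    i = bisect_left(centres, t)
    if i == 0:
        return factors[0][1]
    if i == len(factors):
        return factors[-1][1]
    (t0, f0), (t1, f1) = factors[i - 1], factors[i]
    return f0 + (f1 - f0) * (t - t0) / (t1 - t0)

def normalize_cells(cell_times, cell_signals, factors):
    """Scale every channel of every cell by the factor at its acquisition time."""
    return [[x * factor_at(t, factors) for x in s]
            for t, s in zip(cell_times, cell_signals)]
```

In a toy run where sensitivity halves midway, two cells with the same true signal but acquired before and after the drop come out with matching normalized intensities, which is exactly the drift correction the beads are there to provide.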

A cutting-edge approach comes from the work of Trussart et al. (2020) in eLife. Their paper, “Removing unwanted variation with CytofRUV to integrate multiple CyTOF datasets,” introduces a method that combines both bead-based normalization and computational correction. This hybrid approach promises to deliver more robust and accurate normalization, particularly for large-scale studies involving multiple batches or centers.

The Importance of Quality Control

The significance of rigorous quality control in mass cytometry cannot be overstated. A cautionary tale from a colleague illustrates this point perfectly. In a large multi-center study, one site consistently produced data that didn’t align with the others. After weeks of troubleshooting, it was discovered that their tuning solution had been contaminated, subtly skewing all their measurements. This incident led to the implementation of stringent quality control measures across all sites, including regular proficiency testing with standard samples.

Another critical aspect of quality control is the use of biological controls. Including samples from known healthy donors or cell lines in each run provides a benchmark for assessing the consistency of staining and instrument performance. As one researcher put it, “Our healthy control samples are like our canaries in the coal mine – if they look off, we know something’s not right with the experiment.”
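One simple way to let the canaries sing is to track a summary statistic of the control sample, such as the median intensity of a marker, across runs and flag any run that strays too far from the rest. The sketch below uses a robust z-score based on the median absolute deviation. The function name, the threshold of 3, and the example values are assumptions chosen for illustration, not an established acceptance criterion.

```python
from statistics import median

def flag_control_drift(control_medians, threshold=3.0):
    """Return the run ids whose control-sample median looks off.

    control_medians maps a run id to the median marker intensity measured
    in that run's control sample.
    """
    values = list(control_medians.values())
    centre = median(values)
    mad = median(abs(v - centre) for v in values)
    if mad == 0:
        # All typical runs agree exactly, so any deviation at all is flagged.
        return [run for run, v in control_medians.items() if v != centre]
    scale = 1.4826 * mad  # makes the MAD comparable to a standard deviation
    return [run for run, v in control_medians.items()
            if abs(v - centre) / scale > threshold]

# Made-up values for one marker in the same control sample over five days.
runs = {"day1": 100.0, "day2": 102.0, "day3": 98.0, "day4": 101.0, "day5": 60.0}
print(flag_control_drift(runs))
```

Using the median and MAD rather than the mean and standard deviation keeps a single bad run from inflating the yardstick it is being measured against.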

In conclusion, quality control in mass cytometry is a multi-faceted endeavor, encompassing instrument calibration, batch effect correction, and data normalization. From the daily ritual of instrument tuning to sophisticated computational corrections, each step plays a crucial role in ensuring the reliability and reproducibility of our data. As mass cytometry continues to push the boundaries of single-cell analysis, these quality control measures serve as the bedrock upon which groundbreaking discoveries are built. They may not be the most glamorous part of mass cytometry, but they are undoubtedly among the most important.

Quality control in mass cytometry is like being a meticulous orchestra conductor, ensuring every instrument plays in perfect harmony. During my PhD at LUMC, I became a maestro of consistency, conducting a symphony of cells across two CyTOFs. My secret weapon? Quality check samples – cellular spies scattered throughout my experiments like vigilant sentinels. Each day, these identical samples would sing their molecular song, allowing me to detect even the slightest off-key note in the CyTOF's performance. Running samples on both machines simultaneously was my scientific stereo system, a "trust but verify" approach that would make even the most paranoid researcher proud. It was exhausting, yes, but in the high-stakes world of mass cytometry, this obsession with quality control saved my data more times than I care to admit. After all, when you're dealing with machines that can be as temperamental as divas, it pays to be prepared. In the end, it's not paranoia if the CyTOFs really are out to get you.

Guillaume Beyrend